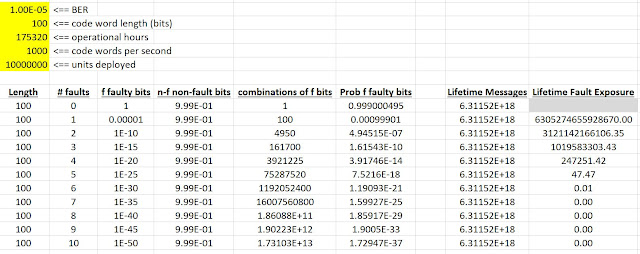

I've been thinking about why a Hamming Distance of 6 is so often the target for network checksums/CRCs. It is certainly designed into many network protocols, but sometimes I like to ask whether folk wisdom makes sense. So I did a trial calculation:

- 100 bit messages

- 1000 messages/second

- 20 year operational life

- 10 million deployed units

- 1e-5 bit error ratio

I got 47.47 expected arrivals of 5-bit corrupted messages over the fleet lifetime (random independent bisymmetric "bit flip" fault model at BER), and a probability of under 1% that any 6-bit corrupted message ever arrives. A code with HD=6 is guaranteed to detect every corruption of up to 5 bits, so the 5-bit cases that are expected to happen are all caught, and the 6-bit cases that might slip through are unlikely to happen at all. So that seems to justify HD=6 as being reasonable for this example system, which looks to me like a CAN network with low-cost network driver circuits on a car fleet. (Even with a 10x fleet size it still works out as more likely than not that there will be no 6-bit faults.) The safety argument here would simply be that there will never be an undetected network message fault for this fault model.
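For anyone who prefers to check the arithmetic in code, here is a minimal Python sketch of the trial calculation. It assumes independent bit flips (so the number of flipped bits per message is binomial), a 365.25-day year, and a Poisson approximation for "at least one arrival"; the function and constant names are mine, not from any library. Under those assumptions it should land close to the 47.47 figure for 5-bit corruptions and just under 1% for 6-bit corruptions.

```python
from math import comb, expm1

# Parameters of the example system from the list above.
BITS_PER_MESSAGE = 100
MESSAGES_PER_SECOND = 1000
YEARS = 20
UNITS = 10_000_000
BER = 1e-5

# Assumption: a 365.25-day year; a 365-day year shifts the result slightly.
SECONDS_PER_YEAR = 365.25 * 24 * 3600


def prob_exactly_k_flips(k, n=BITS_PER_MESSAGE, ber=BER):
    """Binomial probability that exactly k of n bits are flipped."""
    return comb(n, k) * ber**k * (1 - ber) ** (n - k)


def total_messages(units=UNITS):
    """Messages sent by the whole fleet over its operational life."""
    return MESSAGES_PER_SECOND * SECONDS_PER_YEAR * YEARS * units


def expected_count(k, units=UNITS):
    """Expected number of messages arriving with exactly k flipped bits."""
    return total_messages(units) * prob_exactly_k_flips(k)


def prob_at_least_one(k, units=UNITS):
    """Poisson approximation: P(at least one k-bit corrupted message)."""
    return -expm1(-expected_count(k, units))


if __name__ == "__main__":
    print(f"expected 5-bit corrupted messages: {expected_count(5):.2f}")
    print(f"P(any 6-bit corrupted message):    {prob_at_least_one(6):.4f}")
    print(f"same, with a 10x fleet:            {prob_at_least_one(6, 10 * UNITS):.4f}")
```

Changing the constants at the top is the quickest way to redo the calculation for a different message length, message rate, fleet size, or BER.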

Did I miss something? (Did I get my math wrong :) ?) Does anyone know of a place where HD=6 is justified in some way other than by folklore? So much of checksums and CRCs risks being lost in folklore as the greybeards retire. I'm trying to capture some of this type of thinking for posterity before it is my turn to retire...

In my experience 1e-5 BER is a typical conservative number for an embedded network. That means about 1% of 1000-bit network messages are corrupted (about 0.1% of the 100-bit messages in the example above). A higher BER than that and it's going to be difficult to get a working system. A lower BER than that and there is economic pressure to use cheaper cabling with worse BER. But this is a rule-of-thumb approximation. You should do the calculation for your own network. For IT networks BER is dramatically lower, but they tend to operate in a much more benign environment and have budget for better network hardware.
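As a rough way to do that calculation for your own network, the fraction of messages with at least one flipped bit follows directly from the BER and the message length. This is a sketch under the same independent bit flip assumption; `message_corruption_probability` is just an illustrative name.

```python
def message_corruption_probability(bits_per_message, ber):
    """Probability that a message has at least one flipped bit,
    assuming each bit flips independently with probability ber."""
    return 1.0 - (1.0 - ber) ** bits_per_message


if __name__ == "__main__":
    for bits in (100, 1000):
        p = message_corruption_probability(bits, 1e-5)
        print(f"{bits}-bit messages at 1e-5 BER: {p:.3%} corrupted")
```

For small BER the result is close to `bits_per_message * ber`, which is why the corrupted fraction scales almost linearly with message length.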

Wolfram Alpha computation for those who'd like to check my work (you might need to click on "approximate solution" to get the actual number):

Excel source: https://archive.org/details/hd-worksheet