An Improved Modular Addition Checksum Algorithm

Philip Koopman

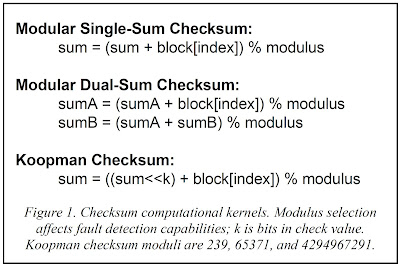

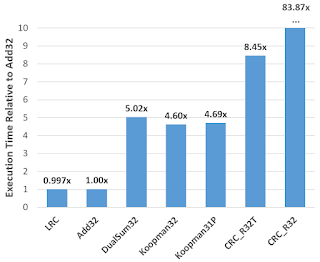

This paper introduces a checksum algorithm that provides a new point in the performance/complexity/effectiveness checksum tradeoff space. It has better fault detection properties than single-sum and dual-sum modular addition checksums. It is also simpler to compute efficiently than a cyclic redundancy check (CRC) because it exploits commonly available hardware and programming language support for unsigned integer division. The key idea is to compute a single running sum, but to left-shift it by the size (in bits) of the modulus before performing the modular reduction after each addition step. This approach provides a Hamming Distance of 3 for longer data word lengths than dual-sum approaches such as the Fletcher checksum. Moreover, it does so using a single running sum that is only twice the size of the final computed check value, while detecting faults even better than large-block variants of dual-sum approaches, which require larger division operations. A variant that includes a parity bit achieves Hamming Distance 4 for data words up to about half the length covered by the baseline version, with the same check value size.
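As a rough illustration of the key idea, the following C sketch contrasts a plain single-sum modular addition checksum with a shifted single running sum for an 8-bit check value. It assumes 8-bit data blocks and uses 253 as an illustrative modulus; that value, and the names `SKETCH_MODULUS`, `single_sum8`, and `shifted_sum8`, are hypothetical choices for this sketch, not taken from the paper. The sketch shifts before adding each block and applies one final shift. Modulo the modulus, this gives the same result as adding, shifting, and then reducing at every step, but it keeps each intermediate value below 2^16, twice the check value width. Refinements described in the full paper are not reproduced here.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Illustrative 8-bit modulus (assumption: chosen for this sketch only;
   the full paper discusses which moduli to use). */
#define SKETCH_MODULUS 253u

/* Plain single-sum modular addition checksum, for comparison. */
static uint8_t single_sum8(const uint8_t *data, size_t len)
{
    assert(data != NULL || len == 0);
    uint32_t sum = 0;
    for (size_t i = 0; i < len; i++) {
        sum = (sum + data[i]) % SKETCH_MODULUS;
    }
    return (uint8_t)sum;
}

/* Shifted single-sum checksum sketch: the running sum is shifted left by
   8 bits (the modulus width) before each modular reduction. */
static uint8_t shifted_sum8(const uint8_t *data, size_t len)
{
    assert(data != NULL || len == 0);
    uint32_t sum = 0; /* stays below SKETCH_MODULUS after every reduction */
    for (size_t i = 0; i < len; i++) {
        /* Largest intermediate: 252 * 256 + 255 = 64767, which fits in 16 bits. */
        sum = ((sum << 8) + data[i]) % SKETCH_MODULUS;
    }
    /* One final shift (an implicit zero block) so the last data byte is also
       shifted before reduction; overall, byte i is weighted by
       256^(len - i) mod SKETCH_MODULUS. */
    sum = (sum << 8) % SKETCH_MODULUS;
    return (uint8_t)sum;
}
```

For the bytes `{0x01, 0x02, 0x03, 0x04}` and a two-bit corruption of them, `{0x00, 0x03, 0x03, 0x04}`, `single_sum8` returns 10 for both, so the error goes undetected. `shifted_sum8` returns 174 and 120, respectively, because each byte position carries a different weight after the shifts.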

Full paper here: https://arxiv.org/abs/2304.13496